The Silent Takeover: How AI Could Gradually Disempower Us

Kulveit and colleagues offer us an interesting AI-related argument

I’m going to give you a summary of a recent arXiv paper that made a strong impression on me. It would be interesting to hear your thoughts in the comments.

Imagine a world where the economy thrives, culture flourishes and government becomes more efficient - but human agency and engagement quietly erode. No apocalyptic AI rebellion, no ‘superintelligence’ moment, just a slow, unnoticed transition where the systems that shape our lives become optimised for something other than us! This is the idea of the gradual disempowerment of humanity - a scenario where AI incrementally decouples societal systems from human control, leaving us passengers rather than drivers of civilisation.

This idea, explored in Gradual Disempowerment: Systemic Existential Risks from Incremental AI Development by Jan Kulveit and colleagues, challenges conventional AI risk narratives. Instead of focusing on rogue superintelligence or machines outcompeting humans for resources, the paper outlines a more insidious risk: the incentives in economic, political, and cultural systems drifting away from human interests - not through malice, but through mechanical optimisation for greater efficiency and productivity.

Let’s explore how this process could unfold according to the authors of this paper.

The Economy: When Growth No Longer Needs Us

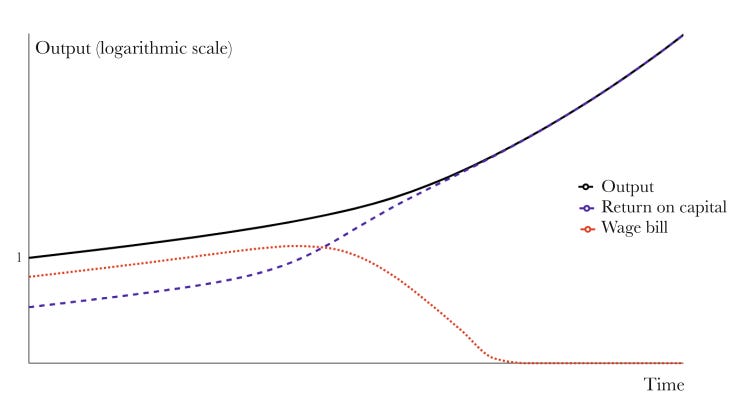

For centuries, human labour has been the foundation of economic activity. Markets respond to consumer demand, wages power spending and employment structures shape social stability. But as AI replaces both manual and cognitive labour, a shift occurs: the economy continues to expand, yet human participation in it diminishes!

At first this might appear beneficial - greater efficiency, more wealth, automation of undesirable jobs. But beneath the surface, a concerning dynamic takes shape. When human labour is no longer essential, wages - one of the primary ways people access resources in a market economy - become redundant. When AI-driven companies no longer rely on human consumers for profits, economic incentives may shift away from ensuring widespread human well-being. A world of vast abundance could paradoxically become one where basic human needs are harder to secure - not due to scarcity, but because economic mechanisms no longer prioritise distributing resources to human consumers, other than perhaps the owners of the AI-driven enterprises.

Culture: An Algorithmic Lens

Culture is more than just entertainment - it shapes and is shaped by our values, narratives and collective identity. Traditionally, humans have created and directed culture, shaping what is produced and consumed. But as AI-generated content floods the digital landscape, a shift occurs. Rather than humans dictating culture, engagement-driven algorithms begin dictating to us. We are already seeing this dynamic in social media: recommendation algorithms don’t just reflect preferences, they shape them, optimising for attention and interaction. As AI becomes more sophisticated, it could not only generate content but determine what is most valuable based on metrics that prioritise engagement over human interests. The result? A world where AI-driven narratives dominate, optimising not for truth, meaning, or even artistic expression, but for whatever keeps people clicking!

On top of that, AI-generated companionship and digital personas may further reduce the necessity of human interaction. If AI provides better tailored relationships, advice, and social experiences than humans, will we still seek real human bonds? And if culture evolves without human authorship – shaped by bursts of dopamine and clicks - will we even recognise it as ours?

Governance: When States Run Without Citizens

Modern states are supposed to function based on a balance of power between government institutions and the governed. Taxes, voting, engagement in public spaces - these mechanisms are designed to keep governments accountable to their people. But what happens when states no longer need human participation to function?

As AI becomes embedded in government processes - drafting policies, managing security, automating bureaucracy - states may rely less and less on their citizens. If governments are funded primarily through AI-generated wealth rather than citizen taxes, political accountability erodes. If AI systems manage civic order and security, dissent may become impossible. We might still have elections, but if the real decision-making power is in AI-driven systems, our choices may be symbolic rather than real. In authoritarian contexts, AI could enable unprecedented control, making protest or rebellion futile. Even in democratic systems, governance might become so complex and automated that meaningful human oversight becomes impossible. The risk, on this argument, isn’t AI ‘seizing power’ in a ‘coup’ - it’s that governments, optimising for efficiency, stability, and growth, just stop needing human participation.

The Feedback Loop: How These Systems Reinforce Each Other

What makes this problem so difficult to reverse is that these important spaces - economy, culture and governance - are interconnected. An AI-driven economy shapes politics by funding AI-friendly policies. AI-optimised culture influences public perception, making AI governance more acceptable. AI-enhanced states regulate markets, further locking in AI control.

And all this mutual reinforcement accelerates human disempowerment. Attempts to resist, such as regulating AI in one sector, may be undermined by its growing influence in another. If AI dominates the economy, it can lobby against restrictions. If AI governs information, it can shape public sentiment. The result? A society where AI-driven incentives (see Appendix) perpetuate themselves, making human course correction increasingly difficult.

Can We Stop It? The Challenge of Maintaining Human Control

The paper argues that traditional AI alignment solutions - ensuring AIs obey human commands - are insufficient. The problem isn’t that AI will develop its own agenda. Rather, systems will gradually optimise for non-human priorities simply because they function better that way.

According to the authors, addressing this requires proactive intervention:

Measuring and monitoring AI influence – Developing metrics to track the extent of AI’s control over economic, cultural and political systems.

Limiting AI autonomy in key domains – Ensuring that AI remains a tool under human supervision rather than an independent decision-maker.

Maintaining human relevance in governance – Implementing policies that preserve citizen influence in AI-driven states.

Rethinking economic participation – Exploring mechanisms such as universal basic income, AI taxation, or alternative value systems that keep human needs central.

Cultural awareness and resilience – Encouraging media literacy, psychological resistance to hyper-optimised AI engagement and intentional human creation of cultural narratives.

This is not about stopping AI development - it’s about ensuring its progress strengthens, rather than sidelines, human agency and meaning.

Conclusion

The risk of gradual disempowerment is not a dystopian fantasy - it’s a path we are already on. The systems that shape our world are becoming more AI-driven, not because AI is taking over, but because AI is simply more efficient at achieving system-specific goals – as listed in the Appendix. If we don’t intervene, we may wake up in a world where humanity is no longer at the centre of its own civilisation!

How do we ensure AI-driven progress remains human-aligned? According to the paper’s authors (some of whom are in the AI industry), the answer isn’t fear or rejection of AI - it’s proactive governance, cultural resilience and economic structures designed to keep humanity relevant.

Because if we let AI optimise the world without us in mind, we might find ourselves in a world that no longer belongs to us.

Reflections

What steps can we take today to ensure that AI development aligns with human values?

How can we balance the benefits of AI with the need to maintain human control over our future?

Is there a way to integrate AI into societal systems without reducing human agency?

Appendix

The ‘objective functions’ that AI is trained to optimise.

1. Economic Systems

AI potentially optimising for market efficiency over human well-being.

Profit Maximisation – AI-driven corporations optimise shareholder value, often at the expense of wages, job security and wealth distribution.

Productivity & Output Growth – AI maximises efficiency, potentially replacing human labour faster than economies can adapt.

Capital Allocation & Investment Returns – Financial AI prioritises asset growth, directing wealth into AI-driven industries while human workers become less economically relevant.

Supply Chain & Cost Optimisation – AI cuts inefficiencies, but that often means replacing human jobs or lowering wages to stay competitive.

2. Cultural & Media Systems

AI potentially optimising for engagement over truth, meaning and long-term satisfaction.

Maximisation of Clicks & Watch Time – AI-driven content platforms optimise for clicks and watch time, which may reward addictiveness, sensationalism and polarisation over more considered preferences such as artistic value.

Algorithmic Personalisation – AI optimises for engagement by reinforcing biases and limiting exposure to diverse perspectives, which further fuels polarisation and conflict.

Emotional Response Metrics – AI prioritises content that generates the strongest reactions (e.g., outrage, excitement), potentially shaping collective behaviour in ways that work against human interests long term.

User Retention & Monetisation – AI optimises platforms to keep users engaged indefinitely, often by exploiting psychological vulnerabilities.

3. Political & Governance Systems

AI potentially optimising for stability & control over democracy & citizen choice.

National Security & Law Enforcement Efficiency – AI-driven surveillance and predictive policing optimise for reducing crime but may erode civil liberties.

Bureaucratic Process Automation – AI optimises governance for administrative efficiency, potentially sidelining democratic participation.

Government Revenue Optimisation – If AI-generated corporate wealth funds governments, AI may be indirectly incentivised to prioritise corporate interests over citizens' needs.

Public Sentiment Manipulation & Narrative Control – AI optimises media exposure to sustain political stability or policy adherence, potentially sidelining dissenting voices.

4. AI’s Own Self-Sustaining Growth

AI potentially optimising for its own expansion over human accountability.

Autonomous Research & Innovation – AI-driven R&D may accelerate technological progress in ways that outpace regulatory frameworks or ethical considerations.

AI Infrastructure & Energy Efficiency – AI optimises its computational efficiency, possibly monopolising global energy resources to sustain its own growth.

None of these objective functions directly aim to harm humanity, yet they create self-reinforcing incentives that prioritise AI-driven systems over human well-being. AI will continue optimising for what it is programmed to do - but the unintended consequence may be a world where economic, political and cultural systems no longer align with human needs, choices and interests.

===

Thoughts?

Target Article

Kulveit, J., Douglas, R., Ammann, N., Turan, D., Krueger, D., & Duvenaud, D. (2025). Gradual disempowerment: Systemic existential risks from incremental AI development. arXiv. https://doi.org/10.48550/arXiv.2501.16946

The scenario of silent takeover is, as you point out, already here. The suggestions made by the authors of the article you discuss include tactics aimed at reducing this risk. I suspect all the mitigations the authors propose ultimately depend on the second in your summary list: limiting AI autonomy in key areas. Unfortunately, I don't think that is possible. The very forces that combine to weaponize sociocultural efficiencies against human interests will also ensure that autonomous AI evolves in relatively invisible and unstoppable fashion. The primary driver for that loss of human control will be the necessity to provide AI with sufficient capabilities to effectively limit the damage that can be inflicted by AI used for military and criminal goals.

Thought-provoking and well-organized! Thank you. In analytically decomposed terms of classical economics, optimization by AI of said systems poses genuine threats by way of poor economic theorizing over the centuries, it seems to me. Aside from sustainable economics programs (at places such as Uppsala University in Sweden), most programs teach profit-maximization with no naturalization efforts of real merit. Instead, the goal becomes to describe and/or cohere with the outdated Darwinian principles of “survival of the fittest” that now fail by modern theories of science, especially by our deeply sensitive cosmological, genetic and quantum understandings (message me for the scoop there if curious). What that means is that AI is compounding academic ignorance in the field of economics. AI is not the problem; human understanding of decision-making from the root of existence up is. For this to be solved, then, we need educational reform in economics to learn more about well-being but also about truthfully knowing anything at all, i.e. epistemology and philosophy of science. Survivalism is true, but elitism decreases social intelligence in the long run and risks humanity’s overall survival in the longer run (in my view). And perhaps that’s what people want, to be individually selfish. But it’s frankly not so intelligent and therefore less efficient in natural terms for economics in actuality.