Why Relational N-Back Is Different — And Why the Surface Format Matters

On a new paper in Brain Topography, and what it means for training fluid intelligence

There is a recurring problem in cognitive training research. Something works in the lab — working memory improves, reaction times drop, participants feel sharper — and then the effect evaporates the moment you test something even slightly different. Far transfer, the holy grail of cognitive training, stays stubbornly out of reach.

A recently published study in Brain Topography (Wang, Sun & Xiao, 2025) does not solve this problem. But it does something more interesting: it points, with neurological precision, at why some training designs are better positioned than others to support genuine transfer. And it does so in a way that has direct implications for how we should think about training relational intelligence specifically.

What the Study Did

The researchers ran a randomised controlled experiment with 57 participants split into a relational integration training group and an active control group doing a general knowledge quiz. Training ran for one month. Before and after, participants completed the Sandia Matrices — a fluid intelligence measure — and had their resting EEG recorded.

The training task was not a standard n-back. It was a relational integration task that combined n-back-style temporal comparison with numerical relational reasoning. Participants had to judge whether the relationship between successive numbers — not the numbers themselves — was the same as an earlier relationship. In the 1-back version, they compared a current relation with the previous one. In the 2-back version, they held the comparison across a longer temporal span.

The unit being tracked was not “is this number the same?” but “is the type of change the same?” Same direction of increase. Same magnitude of shift. Same ordinal pattern. The memory load was carried not by items but by relations between items.

This is a subtle but important distinction. Standard n-back trains whether something matches a stored item. Relational n-back trains whether a structural property matches a stored structural property. These are not the same cognitive operation.
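The difference between the two operations can be made concrete with a minimal sketch. It assumes, purely for illustration, that a "relation" is the signed difference between successive numbers; the function names and the example sequence are hypothetical and are not taken from the study's materials.

```python
from typing import List


def item_nback_targets(stimuli: List[int], n: int) -> List[bool]:
    """Standard n-back: does the current item equal the item n steps ago?"""
    return [i >= n and stimuli[i] == stimuli[i - n] for i in range(len(stimuli))]


def relational_nback_targets(stimuli: List[int], n: int) -> List[bool]:
    """Relational n-back: does the current change equal the change n steps ago?"""
    # Assumption: the relation is the signed difference between neighbours.
    relations = [b - a for a, b in zip(stimuli, stimuli[1:])]
    return [k >= n and relations[k] == relations[k - n] for k in range(len(relations))]


sequence = [3, 5, 7, 4, 6, 3]   # changes: +2, +2, -3, +2, -3

print(item_nback_targets(sequence, 1))        # no item repeats, so every entry is False
print(relational_nback_targets(sequence, 1))  # [False, True, False, False, False]
print(relational_nback_targets(sequence, 2))  # [False, False, False, True, True]
```

No number in the sequence ever repeats, so an item-matching strategy finds nothing, yet the relational version contains several targets. What has to be held in memory is the change, not the number.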

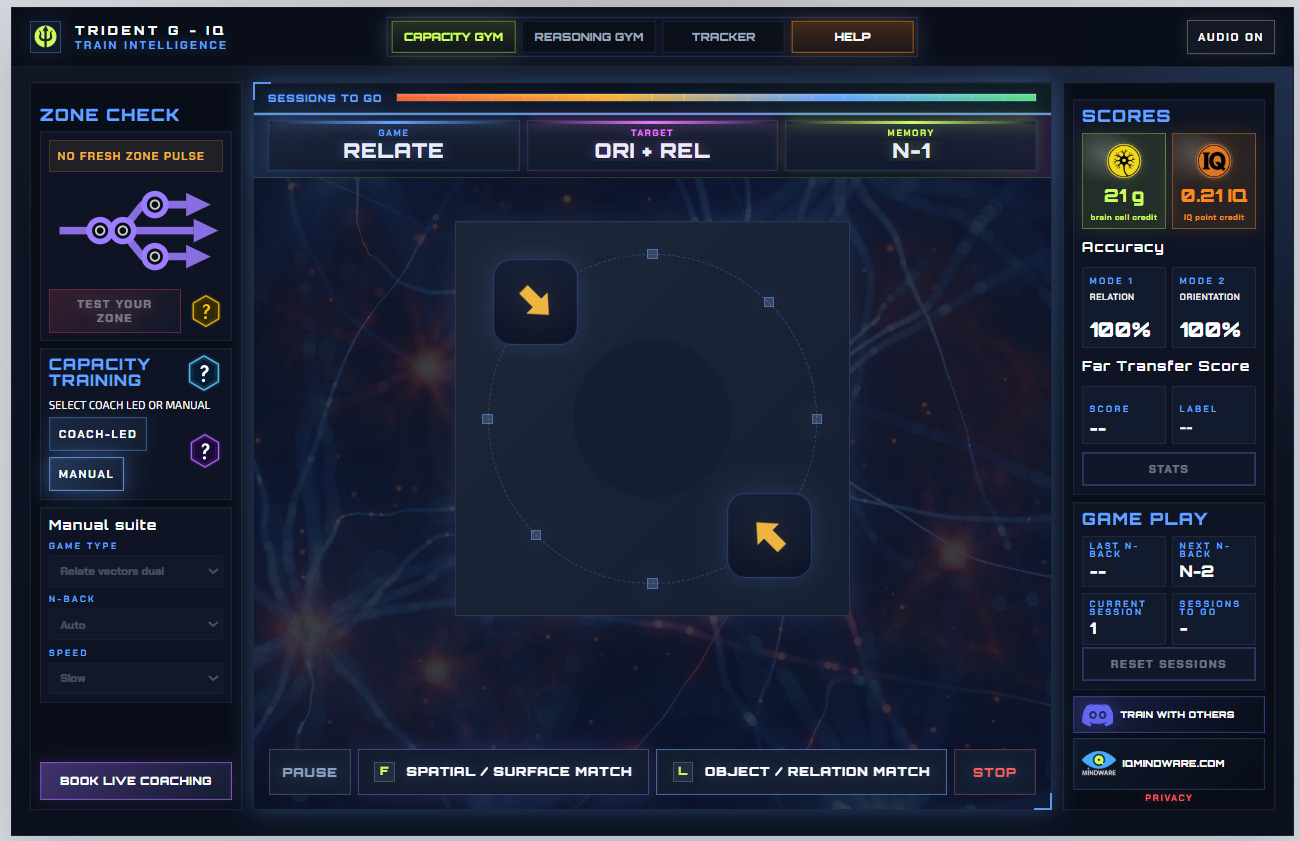

This is exactly the same logic behind my relational n-back training games in the IQMindware.com app.

What the Brain Data Showed

The most striking findings came not from the behavioural tests but from EEG recordings taken while participants were simply resting, not doing any task at all.

The brain is never truly idle. Even at rest, it flickers through a series of brief electrical configurations, each lasting a fraction of a second, cycling between different modes of large-scale network activity. One of these resting configurations is associated with the frontoparietal network, the distributed brain system most consistently linked to fluid intelligence and the ability to reason with novel problems.

After a month of relational integration training, the trained group spent significantly more time in this frontoparietal configuration at rest, and transitioned into it more readily. Importantly, the more time a participant spent in this configuration, the faster their relational reasoning performance tended to be. The brain, even when not doing anything, had reorganised itself toward a mode associated with higher-level reasoning.
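To make "time spent in a configuration" and "transitioning into it" concrete, here is a toy sketch of how both quantities could be computed from a sequence of state labels. The labels (including "FP" for the frontoparietal configuration), the function names and the example sequence are all hypothetical; this is not the study's analysis pipeline, which works on real EEG data.

```python
from collections import Counter
from typing import Dict, List, Tuple


def coverage(labels: List[str]) -> Dict[str, float]:
    """Fraction of all time points spent in each state."""
    counts = Counter(labels)
    return {state: count / len(labels) for state, count in counts.items()}


def transition_probabilities(labels: List[str]) -> Dict[Tuple[str, str], float]:
    """P(next state | current state), counting only changes between different states."""
    switches = [(a, b) for a, b in zip(labels, labels[1:]) if a != b]
    outgoing = Counter(a for a, _ in switches)
    pair_counts = Counter(switches)
    return {pair: count / outgoing[pair[0]] for pair, count in pair_counts.items()}


# Hypothetical label per time point; "FP" stands in for the frontoparietal state.
labels = ["A", "A", "FP", "FP", "FP", "B", "A", "FP"]

print(coverage(labels)["FP"])                         # 0.5
print(transition_probabilities(labels)[("A", "FP")])  # 1.0
```

In these terms, the reported training effect corresponds to a higher coverage value for the frontoparietal state and higher probabilities of switching into it from other states.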

The behavioural results were more modest. Both groups improved on the fluid intelligence test and became faster over the month, but without a clean separation between them. The neural changes were clearer than the performance changes. This means the study is better read as mechanistic evidence that this type of training touches the right neural infrastructure for IQ gains than as a demonstration of the gains themselves.

Why This Matters for Transfer

Here is where the study’s implications reach beyond its own findings.

The reason working memory training generally fails to produce far transfer is now fairly well understood. Standard n-back trains a specific input-output ‘policy’ for a specific surface format: remember whether this letter, or this position, appeared two steps ago. The brain gets good at the policy. The policy does not generalise because it is surface-bound — it is a compiled strategy for a particular kind of stimulus sequence, not a general relational integration capacity.

The relational n-back design forces something different. The trained operation is not “remember item X.” It is “preserve the structural invariant — the type of relational change — across temporal positions.” The surface still matters. The study used numerical relations. But the unit of representation that gets strengthened is at least one level more abstract than the item itself.

This is consistent with what the EEG data showed: changes in a resting-state network associated with general relational processing, not just faster number comparison. The frontoparietal network is not a number-processing network. It is a domain-general relational integration network — one of the systems most strongly associated with fluid intelligence across tasks, domains, and populations.

The implication is careful but real: if you want training to transfer, you need the trained unit of representation to be at the right level of abstraction. Training items does not transfer. Training item policies does not transfer reliably. Training relational operations across items — tracking the structure of change, not just the content — may be better positioned to transfer, because the relational operation is what fluid intelligence actually runs on.

The Surface Stimuli Problem

But here the study points to something it did not itself test, and which I think is the crucial next design question.

The Wang et al. training used numerical relational change as its training modality throughout. Participants became better at tracking whether numerical differences, ordinal shifts, and magnitude patterns repeated across temporal positions. This is a genuine relational operation. But it is a relational operation embedded in one representational format.

A participant who trains for 30 days on numerical relational n-back will inevitably build some surface-specific expertise alongside the general relational capacity. They will get good at reading numerical change efficiently, parsing numerical patterns quickly, and holding numerical relations in working memory. These surface skills are not the target — they are a side-effect. And they potentially contaminate the transfer measurement.

The more interesting design question, and the one my research is based on, is: what happens if you keep the same relational operation but change the surface it runs over?

Numerical difference n-back: is the change between these two numbers the same as the change n steps ago?

Spatial vector n-back: is the direction and magnitude of this spatial displacement the same as the displacement n steps ago?

Symbolic relation n-back: does this icon-pair relationship (larger/smaller, same/different category, left/right of) match the relationship n steps ago?

Object transformation n-back: is this rotation or reflection the same transformation as n steps ago?

Same relational engine. Different representational modes. A training programme that cycles through multiple modalities while holding the relational operation constant is asking something more demanding: not just “can you track relations over time?” but “can you track the same abstract relation when it appears in a completely different representational medium?”
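A minimal sketch of "same engine, different wrappers": one generic matcher, with the surface format supplied as a small function that extracts the relation between two successive stimuli. The function names and example stimuli are hypothetical illustrations, not the implementation used in any particular app or study.

```python
from typing import Callable, Hashable, List, Sequence, Tuple, TypeVar

S = TypeVar("S")


def relation_matches(stimuli: Sequence[S],
                     relation: Callable[[S, S], Hashable],
                     n: int) -> List[bool]:
    """The shared engine: does the current relation equal the one n steps ago?"""
    rels = [relation(a, b) for a, b in zip(stimuli, stimuli[1:])]
    return [k >= n and rels[k] == rels[k - n] for k in range(len(rels))]


# Surface-specific wrappers: each only says how to read a relation off a pair.
def numeric_difference(a: int, b: int) -> int:
    return b - a


def spatial_vector(a: Tuple[int, int], b: Tuple[int, int]) -> Tuple[int, int]:
    return (b[0] - a[0], b[1] - a[1])


def rotation_step(a: int, b: int) -> int:
    # Orientations in degrees; the transformation is the rotation modulo 360.
    return (b - a) % 360


numbers = [2, 5, 8, 6]                      # changes: +3, +3, -2
points = [(0, 0), (1, 2), (2, 4), (2, 3)]   # displacements: (1,2), (1,2), (0,-1)
angles = [0, 90, 180, 90]                   # rotations: 90, 90, 270

for stimuli, wrapper in [(numbers, numeric_difference),
                         (points, spatial_vector),
                         (angles, rotation_step)]:
    print(relation_matches(stimuli, wrapper, 1))   # [False, True, False] each time
```

All three sequences produce the same target pattern because the underlying relational structure is identical; only the wrapper function differs.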

The hypothesis built into my apps is that this cross-format training reduces surface-specific skill accumulation and forces the trained operation to remain genuinely abstract. A person who has tracked numerical relations cannot rely on their numerical fluency when the wrapper switches to spatial vectors. They have to re-execute the relational comparison at a higher level of abstraction, which is exactly the level at which fluid intelligence operates.

The Trident-G Reading

Within the Trident-G framework for general adaptive intelligence, this design logic has a direct theoretical home.

The framework distinguishes between two kinds of learned structure: fast crystallised compilations that are domain-specific, and the slower schematic geometry — the genuine cross-domain invariants that reshape future search spaces. Standard training tends to produce the first. What we want is the second. I call this kind of cognitive skill learning crystallised intelligence.

The mechanism

The key mechanism in the framework is the explore-exploit balance between the two outer forks of the ‘trident’. Far-transfer cognitive learning requires the system to operate in what I call the Ψ-band, or ‘being in the zone’: a near-critical regime where neither rigid exploitation nor runaway exploration dominates. Training that locks the person into one surface format tends to push them toward premature exploitation: they compile a surface-specific strategy, bank it as skill, and stop exploring the deeper relational structure. The research behind this understanding is found here.

Surface form variation is a mechanism for maintaining learning-for-transfer dynamics throughout the training programme. When the surface changes, the compiled surface-specific strategy is invalidated. The brain has to re-enter the exploratory phase and find the relational invariant again in the new medium. Done well, this means each modality-swap is a small-scale bifurcation event — a forced exploration that, if navigated successfully, deepens the genuine relational representation rather than the surface policy.

The design implication is not to mix surface modes randomly. It is to be deliberate about the sequence: establish the relational operation clearly in one format, confirm the person has it, then swap to a new format with the same underlying structure and measure the swap cost. The person who maintains performance across the surface change has the relational operation at the right level of abstraction. The person who collapses has the surface-specific policy but not the underlying structure.

This is a far transfer design principle underlying my own work on cognitive interventions.

What Good Relational Training Looks Like in Practice

The practical design implications, drawn carefully from this evidence:

The trained unit should be a relation, not an item. The participant should be tracking whether the structure of change matches across temporal positions — same direction, same magnitude, same ordinal rank, same pair relationship — not whether a stimulus reappeared.

Difficulty should scale through relational complexity before n-level. Increasing the number of simultaneously tracked relational dimensions (dual relation tracking), introducing lures that match on one dimension but not another, and reducing response time are more likely to deepen the relational operation than simply pushing to 3-back or 4-back on the same surface.

And after training a relational operation in one format, the wrapper should switch while the operation stays constant. The swap cost — the performance drop after the wrapper change — is informative data. Recovery from swap cost across training is a signal that the relational operation is becoming more abstract.
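One possible way to quantify swap cost and recovery is sketched below. The definitions are assumptions chosen for illustration: swap cost is taken as the mean accuracy over the last few pre-swap blocks minus accuracy on the first post-swap block, and recovery is the first post-swap block that comes back within a tolerance of that baseline. The function names, window size and tolerance are hypothetical.

```python
from statistics import mean
from typing import List, Optional


def swap_cost(pre_swap: List[float], post_swap: List[float], window: int = 3) -> float:
    """Baseline accuracy (last `window` pre-swap blocks) minus first post-swap block."""
    baseline = mean(pre_swap[-window:])
    return baseline - post_swap[0]


def recovery_blocks(pre_swap: List[float], post_swap: List[float],
                    window: int = 3, tolerance: float = 0.02) -> Optional[int]:
    """Index of the first post-swap block back within `tolerance` of baseline."""
    baseline = mean(pre_swap[-window:])
    for index, accuracy in enumerate(post_swap):
        if accuracy >= baseline - tolerance:
            return index
    return None  # never recovered within the recorded blocks


# Hypothetical block accuracies before and after a wrapper change.
pre = [0.70, 0.80, 0.84, 0.86, 0.85]
post = [0.70, 0.78, 0.84, 0.86]

print(round(swap_cost(pre, post), 2))   # 0.15
print(recovery_blocks(pre, post))       # 2
```

Tracked across successive swaps, a shrinking swap cost and a shorter recovery would be the pattern expected if the relational operation is becoming less tied to any one surface.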

This paper supports a model in which transfer to untrained n-back tasks (with a different surface modality) gates further transfer to Matrix Reasoning (IQ) and working-memory outcomes. In other words, it is not merely “people trained n-back and then got better IQ scores”; rather, far-transfer effects were linked to whether participants also improved on a different, untrained n-back task.

Consolidation matters too, because it is what hardwires the learning. Short, high-quality relational sessions repeated across days are more likely to support the slow schematic consolidation that produces genuine structural learning than long grinding sessions. The slow pathway that installs the IQ-supporting ‘cortical geometry’ requires adequate rest and sleep. This is not a peripheral concern; it is a direct architectural requirement for the kind of learning that transfers.

Feedback should track relational accuracy, not just response correctness. Knowing you got 78% and your n-back level does not tell you whether you are tracking genuine relational structure or running a fast heuristic on surface features. Feedback that separates out relation-match accuracy, and that tracks performance stability across surface modality swaps, gives the brain much more useful information. That’s what the ‘far transfer’ metric gives you in the training apps I am developing.
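As a rough illustration of why a single percentage is uninformative, the sketch below splits responses into hits, false alarms on lures (non-matches that share a surface feature with a true match), and false alarms on plain non-matches. The `Trial` fields, the function names and the example data are hypothetical; this is not the scoring used in any specific app.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Trial:
    is_match: bool    # the relation truly matches the one n steps back
    is_lure: bool     # non-match that shares a surface feature with a match
    said_match: bool  # the participant's response


def rate(flags: List[bool]) -> float:
    return sum(flags) / len(flags) if flags else float("nan")


def relational_feedback(trials: List[Trial]) -> Dict[str, float]:
    non_matches = [t for t in trials if not t.is_match]
    return {
        "overall_accuracy": rate([t.said_match == t.is_match for t in trials]),
        "hit_rate": rate([t.said_match for t in trials if t.is_match]),
        "lure_false_alarm_rate": rate([t.said_match for t in non_matches if t.is_lure]),
        "plain_false_alarm_rate": rate([t.said_match for t in non_matches if not t.is_lure]),
    }


# Hypothetical session: every true match caught, but 3 of 4 lures also accepted.
trials = (
    [Trial(True, False, True)] * 4
    + [Trial(False, True, True)] * 3
    + [Trial(False, True, False)]
    + [Trial(False, False, False)] * 4
)

feedback = relational_feedback(trials)
print(feedback["overall_accuracy"])       # 0.75
print(feedback["lure_false_alarm_rate"])  # 0.75
```

Here a respectable-looking 75% overall score hides the fact that three out of four lures were accepted, which is the signature of responding to a surface feature instead of the relation.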

The Larger Point

The history of cognitive training research is, in significant part, a history of training the wrong thing at the wrong level of abstraction and then being surprised that the training does not generalise. Working memory training produced near-transfer — people got better at the trained task and similar tasks — but failed to produce far transfer because the trained operation was too surface-bound.

Relational integration training is not a guaranteed solution to this. But it is a more principled approach, because it targets the right level: the operation that fluid intelligence actually runs on, which is relation tracking and relation integration across representational dimensions.

The Wang et al. findings, interpreted carefully, suggest this approach has a mechanistic basis — it leaves a real signature in the resting-state dynamics of a network known to support fluid reasoning.

The goal is not a better brain-training game. It is a training design that respects what general intelligence actually is: not faster item processing, not better item memory, but the capacity to find and hold the invariant structure underneath the shifting surface.

Ashton Smith, M. (2025, December). Trident G: A computational-level theory of intelligent life [Preprint]. ResearchGate. https://doi.org/10.13140/RG.2.2.24468.97925

Wang, Z., Sun, T. & Xiao, F. (2025). Relational Integration Training Modulated the Frontoparietal Network for Fluid Intelligence: An EEG Microstates Study. Brain Topography, 38, 24. https://doi.org/10.1007/s10548-024-01099-3